Following Power BI AI best practices sounds reasonable enough... until you've spent an hour tracing why Copilot summarized the wrong metric. Most Power BI teams are using AI features they don't fully trust - they enable Copilot, run a Q&A query, try out Key Influencers, and get outputs that look plausible but don't match what they know about the business. So they stop using them, or keep using them cautiously and still verify everything manually, which kills the time savings entirely.

The tools aren't the problem. Copilot, Q&A, Key Influencers, Smart Narratives, Anomaly Detection - all of them can do genuinely useful things. But none of them work well if the semantic model underneath is messy, the outputs skip a governance review before reaching executives, and the findings stay trapped inside the dashboard.

These five best practices cover where each of those gaps actually lives.

The short version:

- Build your semantic model first: natural language field names, consistent measure definitions, Approved for Copilot designation

- Treat Copilot output as a first draft: verify measure, timeframe, segment, and business alignment before anything leaves your team

- Use AI visuals for statistical pattern detection: Key Influencers, Anomaly Detection, and Decomposition Tree handle the pattern-finding work that would otherwise take a human analyst hours

- Set up governance before you scale: RLS verification, named reviewers, audit trails for exec-facing reports

- Close the last mile: get AI-generated findings into the decks and reports where decisions actually happen

- For teams still manually assembling Power BI exports into PowerPoint, Rollstack automates that delivery step, mapping live data to governed slide templates and distributing on schedule

What "AI in Power BI" actually covers

Power BI's AI features fall into three distinct buckets, and they work very differently from each other:

Copilot is the generative AI layer. You describe what you want in plain English, like "summarize this report," "build a page showing regional revenue trends," or "write a DAX measure for 90-day retention," and Copilot generates it. It also writes narrative summaries of your dashboard data that update automatically as data refreshes; the Smart Narratives visual itself works without Copilot. Copilot requires Fabric F2+ or Premium P1+ capacity.

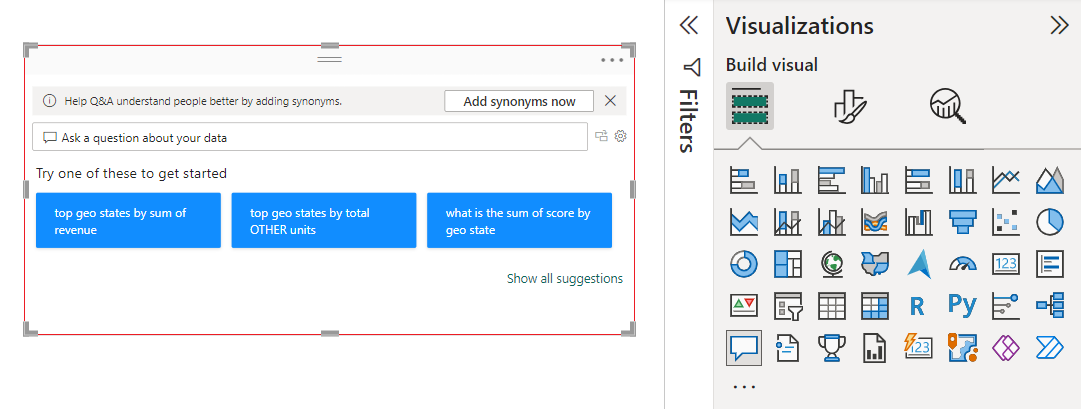

Q&A is a separate, older natural language feature that lets report consumers type questions directly into a dashboard ("what was revenue last quarter by region?") and get a visual answer, without Copilot. It works by mapping plain English to your model's field names and measure definitions, which is why cryptic field names break it. Available on Power BI Pro.

AI visuals like Key Influencers, Anomaly Detection, and Decomposition Tree are chart types that run statistical analysis automatically against your data. They don't generate text; they surface patterns, outliers, and drivers that would take a human analyst hours to find manually. Also available on Power BI Pro.

The best practices below apply across all three, but the prerequisites and failure modes differ, which is why "just turn on AI" rarely works.

Best practice #1: Build the semantic model your Power BI AI features need

Every Power BI AI feature runs against your semantic model. If the model is messy, the AI output will be wrong - often confidently wrong, which is worse than being obviously wrong.

Q&A breaks on cryptic field names. If your table has a column called DIM_CUST_EXTRCT_DT, Q&A has no idea that's "Customer Acquisition Date." Rename fields for natural language comprehension before you turn on AI features, and use Power BI's model view to add synonyms and field descriptions while you're at it. It's the cheapest, highest-ROI prep step for both Q&A and Copilot grounding. Time spent there pays off across every AI feature, not just Q&A.
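One way to find rename candidates is to export your field list and run a rough heuristic over it. The sketch below is only that: the abbreviation set and the sample field names are hypothetical, and the rule (underscores or known abbreviations) is an assumption about what "cryptic" looks like in your warehouse.

```python
import re

# Hypothetical abbreviation list; extend it with the prefixes your warehouse uses.
CRYPTIC_TOKENS = {"dim", "fct", "cust", "extrct", "dt", "amt", "qty"}

def is_cryptic(field_name: str) -> bool:
    """Heuristic: flag underscore-delimited names or names built from known abbreviations."""
    if "_" in field_name:
        return True
    tokens = re.split(r"[^A-Za-z]+", field_name.lower())
    return any(token in CRYPTIC_TOKENS for token in tokens if token)

def fields_to_rename(field_names: list[str]) -> list[str]:
    """Return the fields that probably need a natural-language name or synonym."""
    return [name for name in field_names if is_cryptic(name)]

# Sample field list (made up for illustration).
fields = ["DIM_CUST_EXTRCT_DT", "Customer Acquisition Date", "Net Revenue", "ORD_AMT"]
print(fields_to_rename(fields))  # ['DIM_CUST_EXTRCT_DT', 'ORD_AMT']
```

Anything the heuristic flags still needs a human to pick the right business name; the script only shortens the list you have to read.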

Key Influencers has actual data requirements. The feature runs logistic or linear regression using ML.NET to identify statistical drivers. Per Microsoft's documentation, it generally needs at least 100 observations in the state you're analyzing and 10 in comparison states, running its full analysis on a sample of 10,000 rows. If you're pointing it at a filtered slice of a few hundred rows, treat the output skeptically.
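Before trusting a Key Influencers result, it can help to count the rows behind each state. Here is a minimal sketch of that check, assuming you have exported the column you are analyzing as a plain list; the function name and sample data are hypothetical, and the thresholds are simply the two figures quoted above.

```python
from collections import Counter

MIN_SELECTED_STATE_ROWS = 100   # guidance quoted above for the state being analyzed
MIN_COMPARISON_STATE_ROWS = 10  # guidance quoted above for each comparison state

def key_influencers_warnings(states, selected_state):
    """Return human-readable warnings for states with too few observations."""
    counts = Counter(states)
    warnings = []
    if counts[selected_state] < MIN_SELECTED_STATE_ROWS:
        warnings.append(
            f"'{selected_state}' has {counts[selected_state]} rows; "
            f"want at least {MIN_SELECTED_STATE_ROWS}"
        )
    for state, n in sorted(counts.items()):
        if state != selected_state and n < MIN_COMPARISON_STATE_ROWS:
            warnings.append(
                f"comparison state '{state}' has {n} rows; "
                f"want at least {MIN_COMPARISON_STATE_ROWS}"
            )
    return warnings

# Made-up filtered slice: too few churned rows, and one tiny comparison state.
churn_status = ["Churned"] * 40 + ["Retained"] * 300 + ["Paused"] * 4
for warning in key_influencers_warnings(churn_status, "Churned"):
    print(warning)
```

An empty list means the slice clears both thresholds, not that the output is guaranteed to be meaningful.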

Copilot inherits your measure definitions. When measures are inconsistently defined ("Revenue" in one table, "Net Revenue" in another, both supposedly measuring the same thing), Copilot surfaces inconsistent summaries. Audit your measures for naming consistency before enabling Copilot, and standardize the definitions your team actually uses.
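A measure audit can start as a simple grouping exercise. The sketch below assumes you have already exported measure names, tables, and DAX expressions into a list of dictionaries (the export step and the sample measures are hypothetical). It compares expressions after stripping whitespace and case, which is a crude normalization and will miss measures that are logically equivalent but written differently.

```python
from collections import defaultdict

def normalize(expression: str) -> str:
    """Crude normalization: lowercase and drop all whitespace."""
    return "".join(expression.lower().split())

def audit_measures(measures):
    """Find measures sharing logic under different names, and names reused for different logic."""
    names_by_expr = defaultdict(set)
    exprs_by_name = defaultdict(set)
    for m in measures:
        expr = normalize(m["expression"])
        names_by_expr[expr].add(m["name"])
        exprs_by_name[m["name"]].add(expr)
    same_logic_different_names = sorted(
        sorted(names) for names in names_by_expr.values() if len(names) > 1
    )
    same_name_different_logic = sorted(
        name for name, exprs in exprs_by_name.items() if len(exprs) > 1
    )
    return same_logic_different_names, same_name_different_logic

# Made-up export for illustration.
measures = [
    {"name": "Revenue", "table": "Sales", "expression": "SUM(Sales[Amount])"},
    {"name": "Net Revenue", "table": "Finance", "expression": "sum( Sales[Amount] )"},
    {"name": "Revenue", "table": "Finance",
     "expression": "SUM(Sales[Amount]) - SUM(Sales[Discount])"},
]
duplicates, conflicts = audit_measures(measures)
print(duplicates)  # [['Net Revenue', 'Revenue']]
print(conflicts)   # ['Revenue']
```

Both lists are worth resolving: the first tells you where two names hide one definition, the second where one name hides two.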

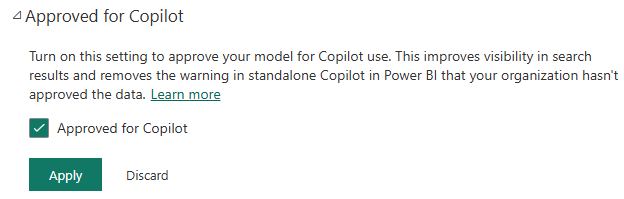

Microsoft Fabric has a formal mechanism for this: marking a semantic model as Approved for Copilot (previously called Prepped for AI). It's a governance signal that your model meets the quality bar for AI use, not just a checkbox. Treat reaching that designation as a first-class goal, not an afterthought. To mark a model:

- In the Power BI service, open the semantic model and select Settings

- Expand the Approved for Copilot section

- Check the box and select Apply

Changes typically take effect within an hour; up to 24 hours for models with many attached reports.

For a deeper guide to building well-structured Power BI semantic models, see Power BI Semantic Models.

Best practice #2: Build a review process for Copilot output

The most important Power BI AI best practice isn't a feature setting. It's a review process. Copilot can generate entire report pages from a text prompt, write contextual narrative summaries of dashboard data, and answer questions in natural language. On a clean semantic model with a clear data story, it saves real time.

But it requires a review discipline that most teams skip. Copilot knows your data schema but not your business, and that gap shows up in the output.

Before any Copilot-generated output leaves your team (particularly anything going into an exec presentation or client report), run through this quick check:

- Is Copilot using the right measure, or a similarly-named one from a different context?

- Do the dates match the reporting period your stakeholders expect?

- Is "enterprise customers" filtering on the right dimension?

- Does the output align with what you know happened in the business?

If the AI says revenue grew 40% and you know it was a flat quarter, something went wrong. Trace back through the prompt, the measure definition, and the model, and trust your business context over what the AI says.
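If you want the four checks above to be an explicit gate rather than a habit, they can be recorded as a small structure. This is a sketch, not a Power BI feature: the class and field names are hypothetical, and a person still sets each flag.

```python
from dataclasses import dataclass

@dataclass
class CopilotOutputReview:
    """One reviewer's answers to the four pre-release checks."""
    measure_verified: bool
    timeframe_verified: bool
    segment_verified: bool
    matches_business_context: bool

    def failed_checks(self) -> list[str]:
        # Field order follows the checklist order above.
        return [name for name, passed in vars(self).items() if not passed]

    def ready_to_share(self) -> bool:
        return not self.failed_checks()

# Example: the "enterprise customers" filter turned out to use the wrong dimension.
review = CopilotOutputReview(
    measure_verified=True,
    timeframe_verified=True,
    segment_verified=False,
    matches_business_context=True,
)
print(review.ready_to_share())  # False
print(review.failed_checks())   # ['segment_verified']
```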

If multiple analysts are using Copilot across reports, consider standardizing a short prompt template so outputs stay consistent: same time period framing, same metric definitions, same grain.

Getting better results from Copilot prompts

The model does most of the work, but prompt quality matters too. A few things that consistently improve output: specify the time period explicitly ("Q3 2024," not "recent"), define what the metric means in your context ("net revenue, excluding returns and discounts"), set the grain you want ("by region and customer segment"), and ask Copilot to include the measure names it used, which gives you something concrete to verify against the model. Use Copilot in the context of the report or semantic model you're working in rather than the standalone chat experience, and keep the prompt anchored to that specific dataset.
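A shared prompt template can be as small as one function that forces every analyst to fill in the same slots. The sketch below is illustrative only: the function name and the wording of the prompt are assumptions, not a format Copilot requires.

```python
def build_copilot_prompt(question: str, period: str, metric_definition: str, grain: str) -> str:
    """Assemble a prompt that always states period, metric definition, and grain."""
    return (
        f"{question} "
        f"Time period: {period}. "
        f"Metric definition: {metric_definition}. "
        f"Break the result down {grain}. "
        "List the measure names you used."
    )

prompt = build_copilot_prompt(
    question="Summarize revenue performance.",
    period="Q3 2024",
    metric_definition="net revenue, excluding returns and discounts",
    grain="by region and customer segment",
)
print(prompt)
```

The closing instruction about measure names is the part that pays off during review, since it gives you something concrete to check against the model.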

Licensing note: Copilot requires paid Fabric capacity (F2+) or Premium capacity (P1+); check current pricing. Per-user licensing (Pro or PPU) alone isn't enough. If your org is on Pro without Fabric or Premium, Copilot is unavailable, but Key Influencers, Q&A, Smart Narratives, and Anomaly Detection still work.
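The licensing rule above can be written down as a quick lookup. This is a simplified sketch that assumes the user already holds a Pro license and that capacity SKUs are passed as strings like "F2" or "P1". It ignores the tenant setting, supported regions, and trial capacities, and the function names are hypothetical.

```python
import re
from typing import Optional, Set

# Features the article lists as available on Power BI Pro.
PRO_AI_FEATURES = {
    "Key Influencers",
    "Q&A",
    "Smart Narratives",
    "Anomaly Detection",
    "Decomposition Tree",
}

def capacity_supports_copilot(sku: Optional[str]) -> bool:
    """True for paid Fabric F2+ or Premium P1+ SKU strings such as 'F64' or 'P1'."""
    if not sku:
        return False
    match = re.fullmatch(r"([FP])(\d+)", sku.strip().upper())
    if not match:
        return False
    family, size = match.group(1), int(match.group(2))
    return size >= (2 if family == "F" else 1)

def available_ai_features(capacity_sku: Optional[str] = None) -> Set[str]:
    """Pro features always; Copilot only when the capacity qualifies."""
    features = set(PRO_AI_FEATURES)
    if capacity_supports_copilot(capacity_sku):
        features.add("Copilot")
    return features

print("Copilot" in available_ai_features(None))   # Pro only
print("Copilot" in available_ai_features("F64"))  # Fabric capacity
```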

Rollstack sits downstream of Power BI: it maps your live visuals to slide templates, runs the refresh on schedule, and distributes formatted decks to stakeholders automatically. SoFi cut their reporting cycle from 6 hours to 45 minutes doing exactly this.

Best practice #3: Use Power BI AI visuals where the machine has the advantage

Microsoft Power BI's AI features go beyond Copilot. Key Influencers, Anomaly Detection, Decomposition Tree, and Q&A are available across Power BI Pro and up (no Fabric or Premium license required), and on high-cardinality, high-row-count datasets, they surface things that would take a human analyst hours to find manually.

Key Influencers runs automated regression to identify which factors most drive a metric up or down. Churn analysis, deal velocity, support escalation - wherever you have the row counts to support it and a stakeholder who actually wants to know "why," this is where the feature earns its place. The output ("Contract Type and Tenure are the top influencers on churn") is the kind of answer that otherwise needs a data scientist to build a model. On thin slices of filtered data, the results get unreliable. Give it the full dataset, not a filtered slice.

Anomaly Detection flags statistical outliers in time-series charts automatically. Pair it with Power BI alerts so your team gets notified when a KPI hits anomalous territory rather than discovering it a week later in a dashboard review. As a passive visual it's just noise; connected to an alert, it's actually useful.
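To make the "flag, then alert" idea concrete, here is a toy version of outlier flagging on a time series. It is not the algorithm Power BI's Anomaly Detection uses; it is a plain trailing z-score stand-in, with a hypothetical window, threshold, and data, meant only to show what flagging a point against recent history looks like.

```python
from statistics import mean, stdev

def flag_anomalies(values, window=7, threshold=3.0):
    """Return indexes whose value sits more than `threshold` standard deviations
    from the mean of the preceding `window` points."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(values[i] - mu) > threshold * sigma:
            flagged.append(i)
    return flagged

# Made-up daily KPI with one obvious spike at index 8.
daily_orders = [100, 102, 98, 101, 99, 103, 100, 101, 160, 102]
for index in flag_anomalies(daily_orders):
    # In practice this is where an alert would fire instead of a print.
    print(f"Anomaly at position {index}: {daily_orders[index]}")
```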

Decomposition Tree is chronically underused for root-cause analysis. When leadership asks "why did revenue drop in Q3?" it lets them drill down interactively across dimensions to find the answer, without needing a new ad-hoc report every time the question shifts.

Q&A Visual lets report consumers ask natural language questions directly on the page. How well it performs depends almost entirely on how well your model fields are named and described, which is why best practice #1 isn't optional if you want this one to work.

For a broader set of Power BI techniques, see 72 Power BI Tips for Advanced Users.

Best practice #4: Govern AI output before it reaches executives

AI-generated content introduces a governance problem that static dashboards don't have. A data label that's wrong is obviously wrong, but a confident narrative summary that misrepresents a metric trend can make it all the way to a board deck before anyone catches it.

The Approved for Copilot model marking is your first line of defense. In Power BI's semantic model settings, you can explicitly designate which models are ready for Copilot use. Approved models get clean responses in the standalone Copilot experience; non-approved models get warning caveats in the output indicating the data hasn't been vetted for AI use. Admins can also enable a separate toggle to restrict standalone Copilot to approved models only. For enterprise deployments where multiple teams publish models to the same workspace, marking models as approved should be the default, not an afterthought.

Row-level security applies to AI features, so users can only query the data they're authorized to see, and Copilot respects these restrictions when answering natural language questions. Verify this with a test user role in your specific setup rather than assuming it works as expected across all configurations.

For anything heading into exec-facing reports, someone needs to read AI-generated narratives before they leave the team - not "someone will probably check it," but an explicit step in the workflow, like any other editorial review. In practice, that means assigning a named reviewer for AI-generated content in high-stakes decks, the same way you'd assign a reviewer for any other external-facing communication. If nobody owns the review, nobody does it.

In regulated industries, apply the same audit trail standard to AI-generated report content that you'd apply to manually authored content: what was generated, from which model, reviewed by whom, and delivered to what audience.
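Those four audit fields map naturally onto a small record. The sketch below shows one possible shape, assuming you store the records yourself; the class, function, and sample values are hypothetical, and the timestamp field is an addition beyond the four items listed above.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class AiContentAuditRecord:
    generated_text: str   # what was generated
    semantic_model: str   # from which model
    reviewed_by: str      # reviewed by whom
    audience: str         # delivered to what audience
    reviewed_at: str      # added for traceability (not in the list above)

def make_record(generated_text, semantic_model, reviewed_by, audience):
    """Refuse to create a record without a named reviewer."""
    if not reviewed_by.strip():
        raise ValueError("A named reviewer is required")
    return AiContentAuditRecord(
        generated_text=generated_text,
        semantic_model=semantic_model,
        reviewed_by=reviewed_by,
        audience=audience,
        reviewed_at=datetime.now(timezone.utc).isoformat(),
    )

record = make_record(
    generated_text="Net revenue was flat quarter over quarter.",
    semantic_model="Finance Core (hypothetical)",
    reviewed_by="j.doe",
    audience="Board deck",
)
print(json.dumps(asdict(record), indent=2))
```

Making the reviewer mandatory at creation time is the design choice that matters: it turns "someone will probably check it" into a record that cannot exist without an owner.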

Best practice #5: Get AI findings out of the dashboard with Rollstack

Power BI's AI features do their work inside the dashboard, but the executives who need to act on that analysis don't live in Power BI - they live in PowerPoint decks, email threads, and Slack. Getting a Copilot-generated Smart Narrative or an anomaly flag into a weekly leadership deck still means screenshots, copy-paste, and manual reformatting. The AI did its job. You're still doing yours.

Power BI does have native delivery options: report subscriptions send scheduled emails with PDF or PowerPoint snapshots, and you can export visuals directly. But these are static exports tied to whatever the report looked like at export time. They don't map to a slide template, they don't position content where the deck expects it, and they go stale the moment the underlying data refreshes. For a one-off report, that's manageable. For teams running recurring cycles across multiple stakeholders, it creates exactly the manual overhead that AI features were supposed to eliminate.

Rollstack connects your Power BI data to PowerPoint, Google Slides, and more, making it easy to automate reporting updates. It maps your Power BI visuals to specific positions in a slide template and runs the refresh and export on a schedule, so one governed template can burst out to dozens of stakeholder or client decks automatically, each updated with live data, without anyone touching them manually. Version control, locked templates, and audit trails mean the output stays governed all the way through the handoff, not just inside the dashboard. And if your team has reviewed and approved a Copilot-generated Smart Narrative in Power BI, Rollstack carries it into the deck too, no copy-paste required. SoFi cut reporting cycle prep from 6 hours to 45 minutes using this approach with their BI stack.

For more on automating Power BI report delivery end-to-end, see How to Automate Power BI Reports and Power BI Scheduled Export.

The foundation of every Power BI AI best practice

The most common failure mode isn't the AI features themselves - it's teams that bolt them onto a messy model, get unreliable output, and conclude the features don't work. Clean field names, standardized measures, an Approved for Copilot designation: none of that is glamorous, but it's what separates teams who trust their AI outputs from teams who screenshot the dashboard and manually check it anyway.

If your team has the first four parts working and is still manually assembling PowerPoint decks from Power BI screenshots every week, that last-mile step is the only thing still costing you hours. See how Rollstack handles it.

Frequently asked questions

What is Copilot in Power BI?

Copilot in Power BI is Microsoft's generative AI layer for report creation and analysis. You describe what you want in plain English ("build a page showing regional revenue trends," "summarize this report," "explain why this metric dropped") and Copilot generates report pages, writes narrative text summaries of your data, assists with DAX formula writing, and answers questions about report content. It requires paid Fabric capacity (F2+) or Power BI Premium (P1+) and must be enabled by your tenant admin.

How do I enable Copilot in Power BI?

Your tenant admin needs to turn on Copilot in the Microsoft Fabric admin portal under Tenant settings. Your organization also needs paid Fabric capacity (F2+) or Power BI Premium (P1+), and the capacity must be in a supported region. Once enabled at the tenant level, Copilot appears as a pane on the right side of reports in the Power BI service, and in Power BI Desktop if you have write access to a qualifying workspace.

Is Power BI Copilot secure?

Copilot respects your existing Power BI security model. Row-level security applies, so users only get answers based on data they're authorized to see. Microsoft processes Copilot prompts through Azure OpenAI and does not use your data to train its models. That said, verify your RLS configuration with a test user role, and in regulated industries treat AI-generated report content the same as any other externally-facing output: audit trails, named reviewers, documented delivery.

Can Power BI Copilot write DAX?

Yes. Copilot can generate DAX measures from plain English descriptions ("create a measure for 90-day rolling average of sales") and explain existing DAX code. Treat the output as a first draft: verify the logic against your model and test the results, since Copilot can produce syntactically valid DAX that still measures the wrong thing if the business context isn't clearly specified in the prompt.

Does Power BI have AI features without Copilot?

Yes. Key Influencers, Anomaly Detection, Decomposition Tree, Smart Narratives, and the Q&A visual are all available with a Power BI Pro license - no Fabric or Premium capacity required. Copilot is the only Power BI AI feature that requires organizational capacity. For a broader look at how AI is reshaping BI tools across the industry, see AI in Business Intelligence.

What license do you need for Copilot in Power BI?

A paid Microsoft Fabric capacity (F2 or higher) or Power BI Premium capacity (P1 or higher) - check current pricing. Power BI Pro and Premium Per User (PPU) don't qualify on their own. Beyond the license, your tenant admin needs to enable Copilot in Fabric settings, and your capacity region needs to be on Microsoft's supported list. If you have the right license but your region isn't supported, Copilot won't appear regardless.

Is Copilot available in Power BI Desktop?

Yes. You can use Copilot in Power BI Desktop to generate report pages, write DAX, and test prompts against your semantic model before publishing. To use the Copilot pane in Desktop, you need write access to a workspace on paid Fabric capacity or Power BI Premium where you plan to publish. Desktop is the right place to author and test; the consumption-side experience (business users asking questions about published reports) lives in the Power BI service.

Why does Copilot give wrong answers in Power BI?

Usually a semantic model problem, not a Copilot problem. If measures are inconsistently named, fields use internal codes instead of natural language names, or the model hasn't been marked Approved for Copilot, the output will be plausible-sounding but wrong. The fix is almost always in the model, not the prompt.

Ready to close the gap between your BI tool and the board deck?

Connect your BI tools directly to slides, docs, email templates and more. Leverage your existing dashboards to update 100s of presentations on a schedule, saving your team hours of manual work.

See how finance teams automate the full delivery layer: from governed BI data to formatted, distributed board materials, without manual exports.